Understanding the challenge of ethical AI: how to avoid being racist, sexist or ageist | WARC | The Feed

Understanding the challenge of ethical AI: how to avoid being racist, sexist or ageist

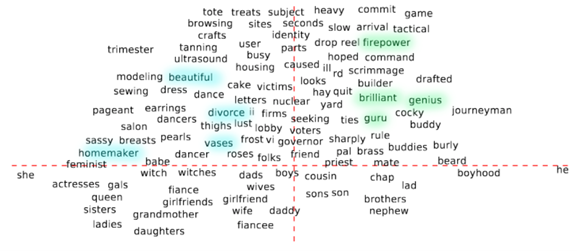

Brands need to overcome biased outcomes in marketing and be especially alert to the unintended biases that can creep into artificial intelligence and create business risk. Three strategy executives from Initiative APAC explain how to tackle the issue.

Why it matters

Algorithms learn from distorted data and the way it is collected and organised. As brands adopt AI automation, they have to be aware of ethical biases and how to avoid biased outcomes in business and marketing. Algorithms’ (seemingly) objective decisions and representations of “good” as a concept inevitably solidify their position as an intermediary of...
